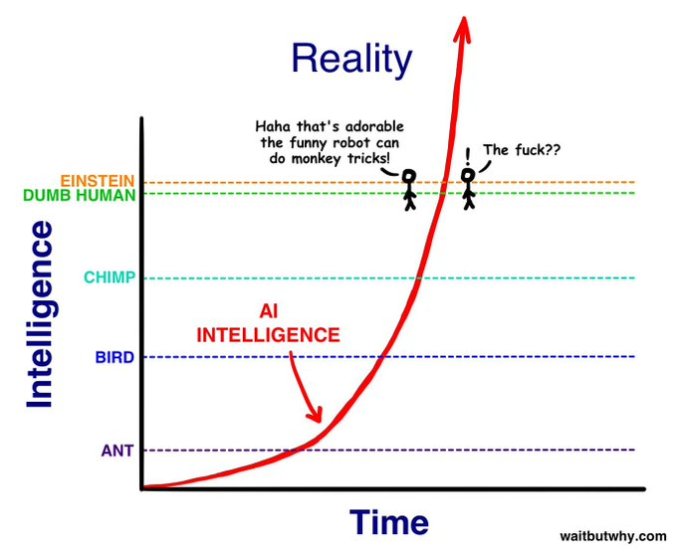

Ah, the J Curve! That’s what you see above, and it has many applications. Herman Kahn, the late futurist who was known as the smartest man in the world (is there anyone who holds that title today?), told me that the J Curve was especially valuable regarding new technologies that destroy previous concepts of what was possible. The microchip. The internet. Now, it’s AI.

Talk about fast! Just four days ago, a crude AI battle between Tom Cruise and Brad Pitt had Hollywood running for Xanax. Now the German Dor brothers have said, “Hold our Augustiner-Bräu!” and produced this in a single day:

Soon Hollywood producers, directors and actors will be jumping off buildings like panicked stockbrokers on Black Tuesday, 1929. Or not. The smart ones will realize that they need to start making better movies. It shouldn’t surprise anyone that the typical shock-and-awe special effects orgies like “2012” and “San Andreas” can be made by a bot. It’s crap made for morons, stoners and people who can’t sit through “On the Waterfront.”

Let me know when the J Curve produces AI that can evoke Paul Scofield, John Hurt, Corin Redgrave, Susannah York and Wendy Hiller in this favorite Ethics Alarms scene:

…or the rest of the movie, for that matter. Until then, if then ever comes, talented actors, writers and directors have nothing to worry about. Intelligence and talent have always been weakly correlated, if at all.