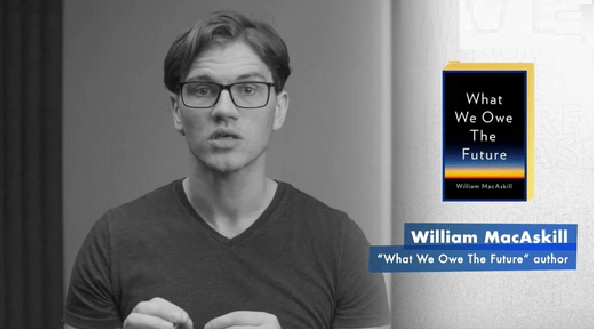

William MacAskill is a philosopher, a professor at Oxford who has a new book out for the riff-raff, “What We Owe the Future.” MacAskill is a key spokesman for the so-called “effective altruism” movement, which advocates “longtermism,” an ethical position that prioritizes the moral worth of future generations and the obligation of present society to protect their interests. You know where this goes, right? “Longtermists argue that humanity should be investing far more resources into mitigating the risk of future catastrophes in general and extinction events in particular,” writes New York Magazine. Got it. This is another climate change activist group shill trying to get civilization to shut down based on speculation, scaremongering and dubious science.

Ann Althouse, who has the time on her hands to read the increasingly leftist New York Magazine so I don’t have to, flagged an interview with MacAskill, and had the wit and integrity to note that the philosopher so focused on the value of future lives never mentioned abortion nor was asked about it. Ann, who initially pronounced the Dobbs decision monstrous, does have integrity, and tracked down another recent book-promotion interview where abortion was raised. Asked whether his movement should be anti-abortion, MacAskill says no, and when pressed (admittedly lamely) on his reasons, resorts to pure jargon and doubletalk, ducking the issue:

I think a few things. The first is, if you think that it’s good to have more happy, flourishing people — and I think if people have sufficiently good lives, then that’s true. I argue for that in What We Owe the Future. Then, by far, by overwhelming amounts, the focus should be on how many people might exist in the future rather than now, where perhaps you have a really good fertility program, and you can increase the world population by 10 percent. That’s like an extra billion people or so. But the loss of future life and future very good life if we go extinct — that’s being measured in the trillions upon trillions of lives. The question of just how many people should be alive today is really driven by, how would that impact the long-term flourishing of humanity? That being said, like all things considered, I think there’s this norm, there’s this idea at the moment that it’s bad to have kids because of the carbon footprint. I think that only looks at one side of the ledger. Yes, people emit carbon dioxide, and that has negative effects, but they also do a lot of good things. They innovate, and there’s an intrinsic benefit too. They have happy lives. Well, if you can bring up people to live good lives, then they will flourish, and that’s making the world better. They also might be moral changemakers, and so on. But then, the question — even suppose you think, “Okay, yes, larger family sizes are good,” the best way of achieving that — it would seem very unlikely, to me, that banning abortion, or more like very heavily restricting women’s reproductive rights, is the best way of going about that….

Oh.

HUH??

What a weasel! The issue, professor, is how a movement can extol the importance of making current sacrifices for future lives and still support the ending of those future lives in the womb for the mother’s convenience. Yet he drones on about carbon footprints and “going extinct,” avoiding the obvious contradiction entirely. Why shouldn’t the Effective Altruism movement be anti-abortion? Because the movement is entirely a tool for accomplishing the Left’s climate change agenda, and those who support it (and MacAskill) are rabidly pro-abortion, that’s why.

I think a more likely reason for his “movement” not to come out as anti-abortion is that it believes a happy, healthy future is one with significantly fewer humans in it. But if he were to say that, he would open himself up to questions about whether he supports culling humans, letting some people die, or forced abortions, and that is an area he does not want to discuss. I submit this comment having never read the book or researched his philosophy.

EA proponents are still fighting the Repugnant Conclusion (https://plato.stanford.edu/entries/repugnant-conclusion/), which is silly, because the premise that B is better than A+ is by itself flawed. (The text says “hard to deny B is better than A+,” but I’d rather go with “it is intuitive that B is better than A+, but this is assumed, not proven.”)

Alex

I read this, and it suggests that some entity or group can establish cardinal values for all economic goods. One example, which begins by substituting one superior good for another, presumes that all consumers of that good value the sequence equally. I know Salieri would not have ranked Mozart higher than his own works, so who is doing all this choosing that ultimately devolves to a singular choice of Muzak versus potatoes? My point is that a person substituting Haydn for Mozart does not necessarily mean Haydn is an inferior good. Superior and inferior goods are determined by relative price levels, not by changing subjective temporal preferences.

Nonetheless the article makes for an interesting read.

Here’s a link to a review of the book: The Effective Altruism movement is…complicated.

Oops, didn’t post the link: https://astralcodexten.substack.com/p/book-review-what-we-owe-the-future

He sounds like a perfectly normal PHL 101 student who wants to sound erudite and sophisticated. They all go about their day philosophizing about their visions of grandeur.

I would like to ask him about the profligate spending and debt accumulation by government, which will be borne by future generations. How will these future generations flourish if saddled with $30+ trillion in debt? What happens when they find out about the off-balance-sheet liabilities the government created? What the Hell: apparently the current generation cannot even handle the average $17K they borrowed to finance their current consumption of PHL 101 and similar studies courses, which they demanded future generations pay off.

It makes me despair that you are accurately describing about half of the people who identify themselves as Internet Rationalists. There was a time when that was not the case.

It is unfortunate that MacAskill is being treated as the face of the Effective Altruism (EA) movement, because his “longtermist” stance (and I hate that word) is not the norm among EA proponents, or even something they think about regularly.

EA has a host of problems that can essentially be distilled to trying to derive an “ought” from an “is.” This is because EA is an offshoot of the internet Rationalist Movement, as developed on Less Wrong, Slate Star Codex, and other communities of that type.

But rather than criticize (and criticisms of EA can be easily found), I want to bring up the good side of EA. At its core, the idea is to maximize the impact of any effort to “do good” by some (strongly argued about) metric. One of the first recommendations from EA was to spend a relatively small amount of money getting mosquito nets to Africa, where using them to reduce the incidence of malaria yields a very high ROI. We’ll save the argument about banning DDT for another day.

If, in the end, someone as informed as Jack is getting this guy as the face of EA, something went wrong in the reporting of the issue, or maybe in the outreach of EA-focused organizations. They don’t do themselves any favors by going single-topic on “X-risk” stuff like AI takeover, but at least most of them 1) are consistent with the “do the most good” approach and 2) make sure their focus is not painted as THE only thing EA proponents should be doing.

PS: Just as an example of another EA-focused org (which I also don’t agree with), look at the one named 80,000 Hours, which proposes to maximize the amount of money smart people can make so they can donate it to EA causes.

The author of Slate Star Codex has created a Substack, Astral Codex Ten. There are a couple of posts which, IMHO, describe Effective Altruism nicely:

https://astralcodexten.substack.com/p/effective-altruism-as-a-tower-of

https://astralcodexten.substack.com/p/criticism-of-criticism-of-criticism

Been following Scott at the new place too (even as a paying customer just for the FU to the NYT). I just keep forgetting this is a slightly different internet circle, thanks for posting.

BTW, Scott once replied to some comments I made here years ago that linked to his work. A delightful person as far as I can tell.

Glad to see another SSC reader!

Alex

One of the problems with the concept of “ought” versus “is” lies with subjective valuations of what is good. Economics teaches us that all things are scarce, which creates a need to make choices. Some choices can be made collectively without argument, but most cannot. For example, how do we define “flourishing” as it relates to society? Many would argue that it means having access to health care and sundry other produced goods and services. Many others would say we should eschew material well-being and focus on self-actualization, thus moving all human existence to a higher plane.

I say the best thing we can do for future generations is to give each member the ability to choose his or her own path to maximize their own level of satisfaction, and not to burden them with our debts. If we assume all do this, then the cumulative subjective valuations will be mathematically maximized.

Completely agree.

Now, to defend the Effective Altruists’ (and Utilitarians’) view, I would say that you need to redefine the function being maximized so that it reflects this personalized view of flourishing. But then you just keep adding epicycles, and the question becomes: “With that many epicycles, isn’t it the base model that needs updating?”

I recall H.G. Wells’s “The Time Machine”: the beautiful, happy, carefree Eloi above ground and the ugly underground Morlocks.