I want to express my admiration and appreciation to the Ethics Alarms comment gang for adding quality content to the blog while I was occupied in involuntary technology wars. The comments were copious and uniformly excellent, with several strong Comment of the Day candidates. In fact, I feel completely superfluous.

Here is Chris Marschner’s Comment of the Day on the post, “More Evidence That Arthur Herzog’s Novel ‘IQ 83’ Is Coming True—Beside The Fact That Bernie Sanders Is Leading The Race For The Democratic Nomination, That Is”:

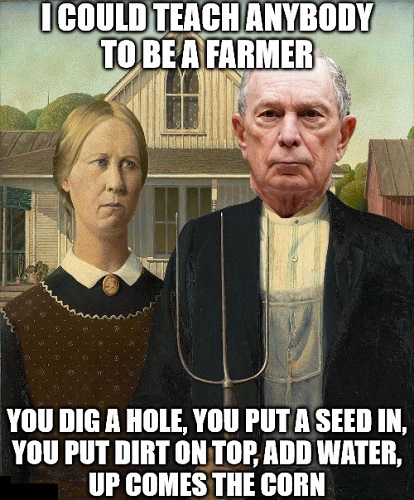

While the focus is on Michelle Wu, Bloomberg’s past comments regarding the intellectual skill sets of farming and manufacturing are beyond the pale for stupidity. He flat out stated that farming requires only the ability to dig a hole and plant a seed, while manufacturing – using a lathe – one only needs to turn on the machine and make it go the right way.

Sure, he was not running for President at the time he made those comments, but for him to suggest that the analytical problem-solving skills the information age requires are not present in these occupations is prima facie evidence that either he lacked analytical skills when he made such a deduction, or his cerebral library of information on occupational skill sets has too few volumes.

While we are closer to AI than we were when Bloomberg established his financial reporting enterprise that allowed investors to make better investing decisions, most computing still relies on if x do y, if not do something else. If the computer cannot make sense of the data, it returns an error code and stops. Fundamentally the computer says, in the immortal words of the robot from Lost in Space, “That Does Not Compute.”